Sometimes we all need a little help

Moving cube data across scenarios (or entities or time or whatever) can be done via a Data Management Copy Data step or simple api.Data.Calculate statements or api.Data.ConvertDataBufferExtendedMembers. But what happens when there’s a need to perform a data movement and there’s a requirement for complex data transformation?

A business rule approach starts to fail when that sort of manipulation is needed (Yr. Obt. Svt. has done that under duress and really wishes he hadn’t), Copy Data can move and map data across Cubes, Entities, Scenarios, Views, and Time Periods but it’s just a one-to-one mapping; other dimensions are not addressed.

Here’s a leading question: does OneStream have a way to do this that isn’t (generally, although you certainly can go that route if needed) code heavy and is flexible? Or course there is: a Data Management Export Data Step and Sequence and a Data Mgmt Export Sequence Data Source. Whew, that’s a lot of capitalization.

In narrative form, the steps are as follows: create a data export step, include it in a sequence, create a new data source that is said sequence, configure and assign transformation rules that may or may not include, plop all of that into a Workflow Import, and load away. Whew, again. It’s easier done than said.

Exporting

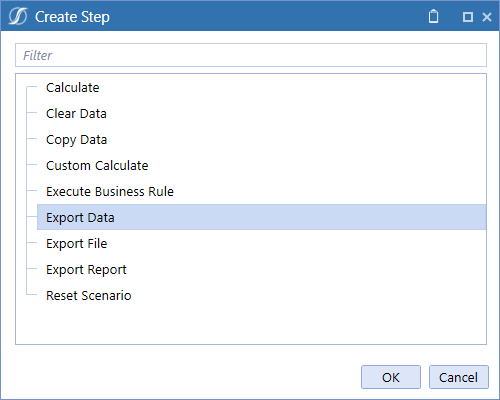

Create a new Data Management Export Data step and perform a few simple property assignments:

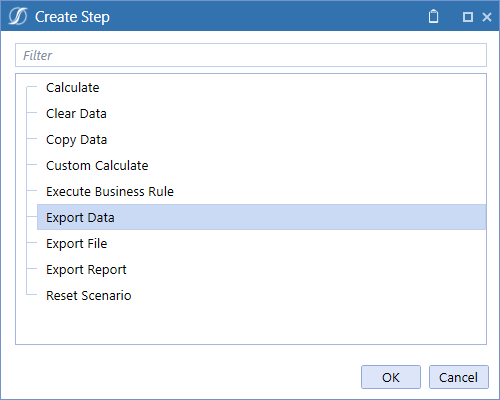

Three rather important property categories

Your choice

You can call the file anything you like. I typically leave this as the default date-based file name, but it doesn’t really matter as this load technique doesn’t look at the file name. If you want to have a history of what the export state was on a date, leave it as is, otherwise feel free to obsess about something likely more important.

Yes, sure, fine

To retain sanity, especially if there are multiple cubes and iterative runs, keep the Include Cube in File Name and Overwrite Existing Files as true.

For the love of all that is good, don’t.

Let me show you what happens if the Entity, Scenario, and Time properties are set to with a very small subset of data.

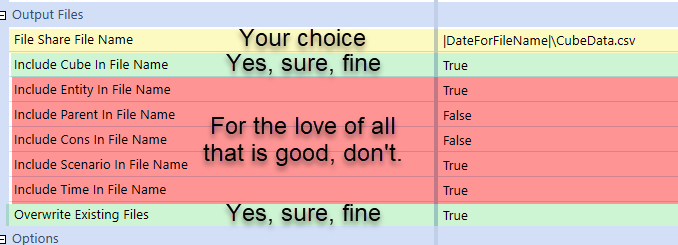

Here’s every bit of 2023 data in A Very Basic Sample

That’s it.

And here’s what happens with Entity and Time included in the file name (assuming T#2023.Base as the period range):

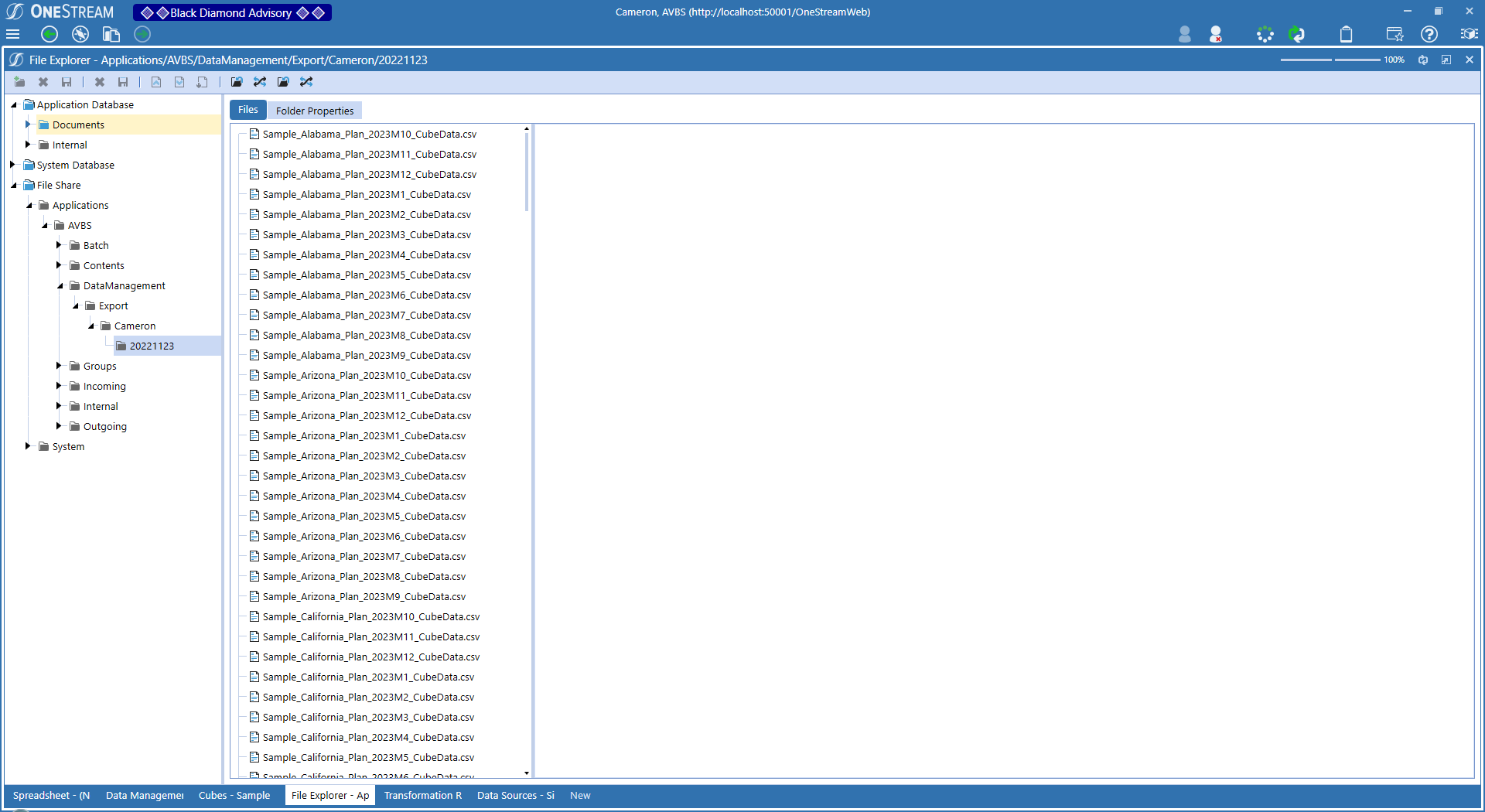

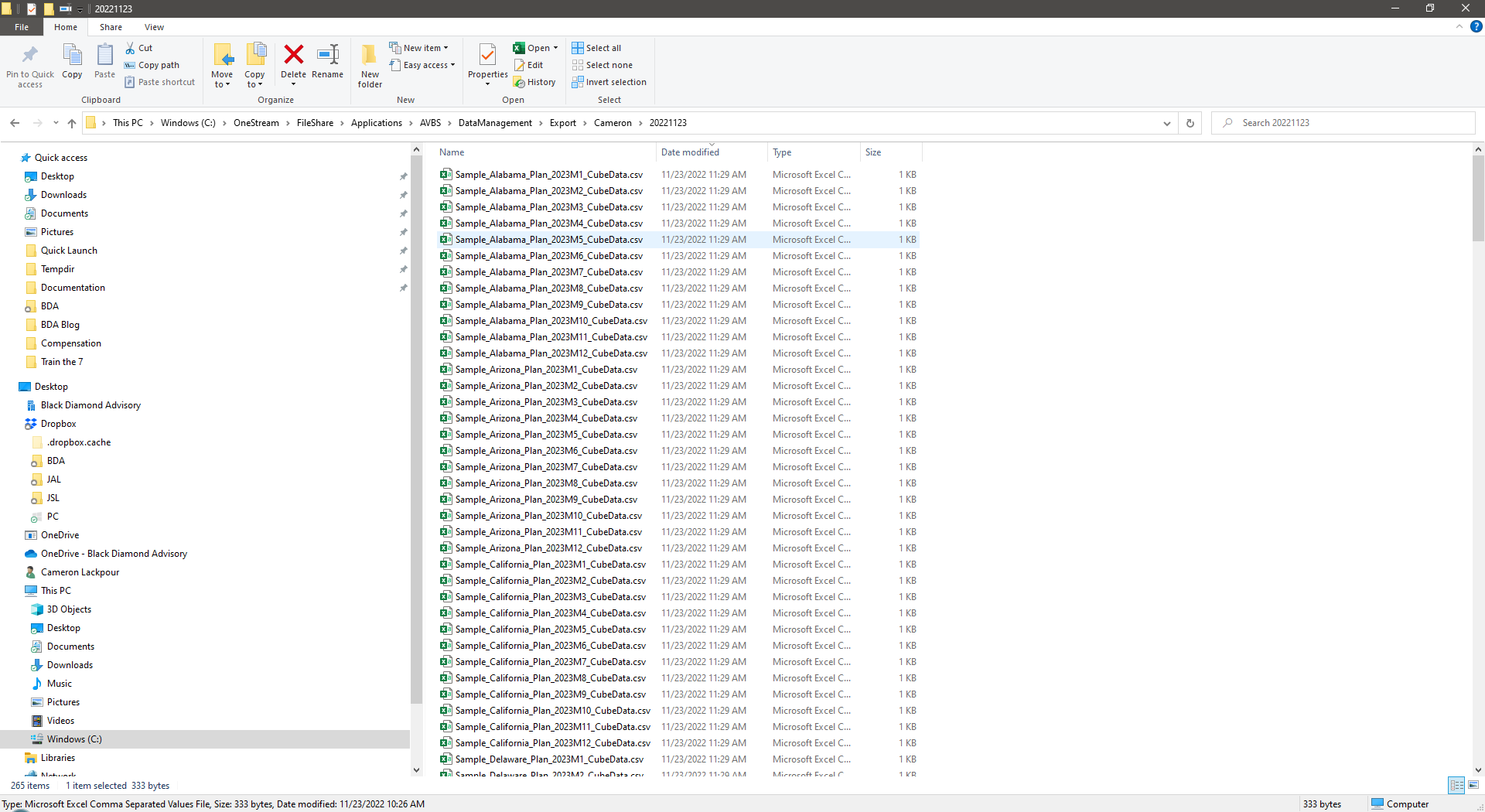

As this is a local install, I can look at this in Windows File Explorer:

That’s 265 files for what is after all very little data. Ugh. And they can’t be deleted via OS’ File Explorer, so cloud customers get to log a Support request. Imagine doing this in a real app with way more information. Superduper bummer. I may have once done this with over 4,600 individual files. But only maybe and definitely only once.

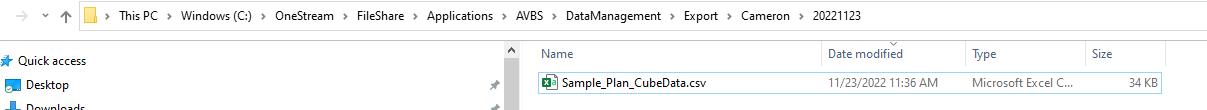

Setting those properties to False, a single file is created:

This is as far as you need worry about the file. It’ll get created every time, in different time-based folders automatically, under whatever username executes the Workflow Import. Easy peasy lemon squeezy.

Addendum

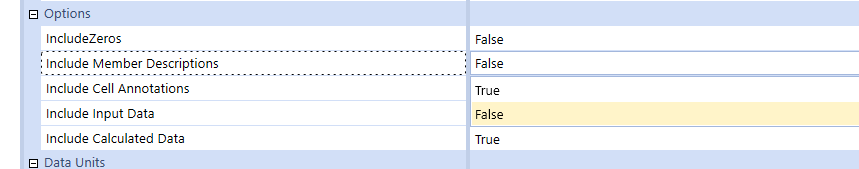

Be sure to sure to set Include Member Descriptions to false as member descriptions can’t be loaded.

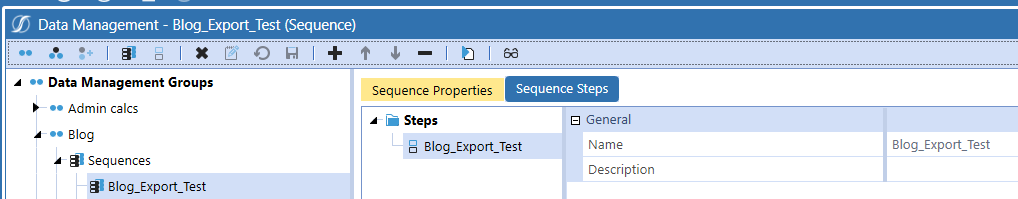

Create a Data Management Sequence

The Data Source looks for a Sequence, not a Step, so create a very short and sweet one of just the export Step:

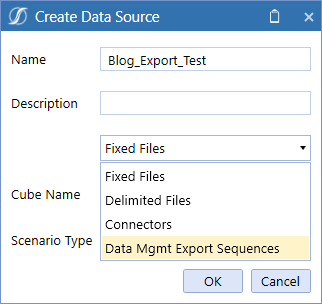

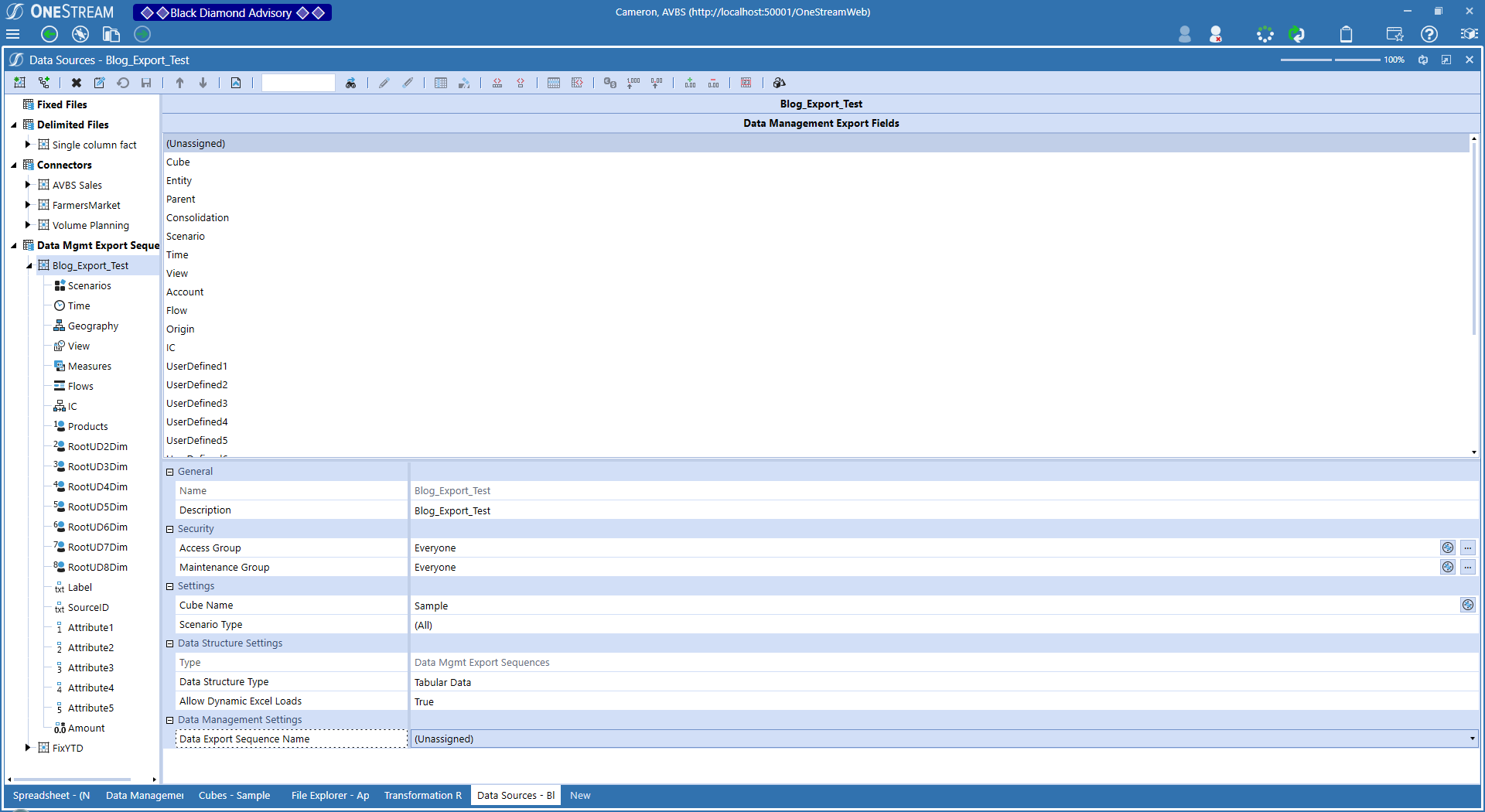

Creating that Data Source

This is magic, it really is.

Create a new Data Source, making its file type “Data Mgmt Export Sequences” with (in my use case) the Sample Cube.

Here’s the magic:

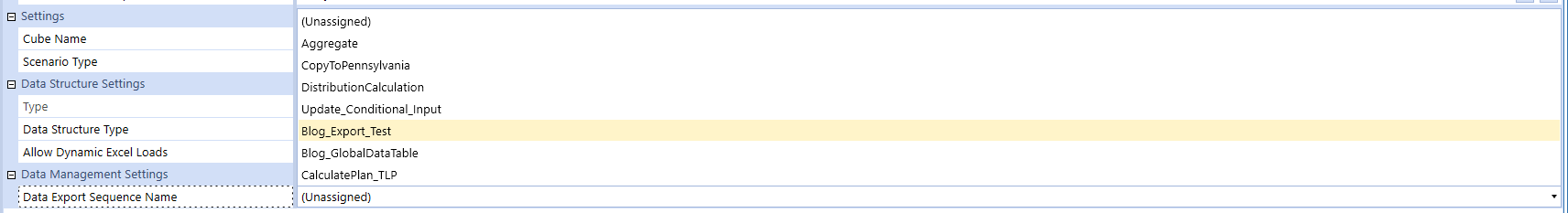

The export is now an import. As noted, magic. Assign that Data Management Sequence to the Data Source property, and Bob’s your uncle, the world (or your exported data) is your oyster:

That’s it for now

Five pages in Word is surely enough for one blog post. Also, I’m lazy. Also, this is written around a US holiday and likely we Little Americans (if there can be Little Englanders, why not the colonies?), i.e., me, will be stuffed with a surfeit of tryptophan and thus not be able to focus on anything too complex. This was the easy prelude, the next part will be quite a bit geekier as I will walk us all through adding Transformation Rules, a sample Parser Rule, a Workflow Import step, and lastly glorious — albeit as always uncertain — triumph when all of this gets loaded into a new Scenario.

Be seeing you.