Map and Transform

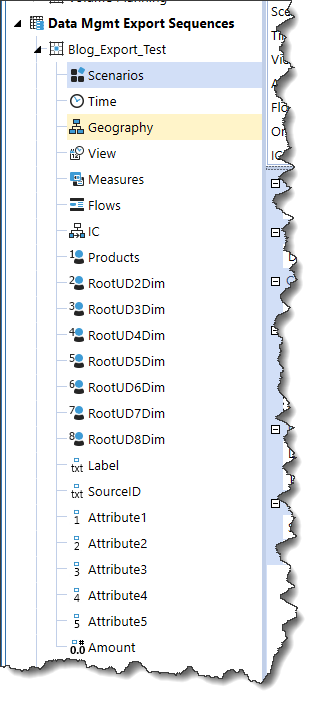

The last thrilling post on this subject ended with a Data Management Export Sequence data source. We must now map its fields to the super-duper AVBS application’s equally super-duper Sample cube. This is just a configuration exercise with no code needed.

I’m only going to show you one or two, lest you die of boredom

Gentle Reader, pray believe me when I write that you need only see a handful of assignments to understand how this mapping works. Even Yr. Obt. Svt. can’t stretch this out to eleventy million pages of screenshots, no matter how lazy I am, although I will note I’ve hit 13 pages in Word so perhaps I can.

But first a note about Label, SourceID, Attribute1 through Attribute5

These fields are not in the export file. I don’t need them, I’m not sure why they’re in the export field list, and I’m going to ignore them. I’ll probably be sorry.

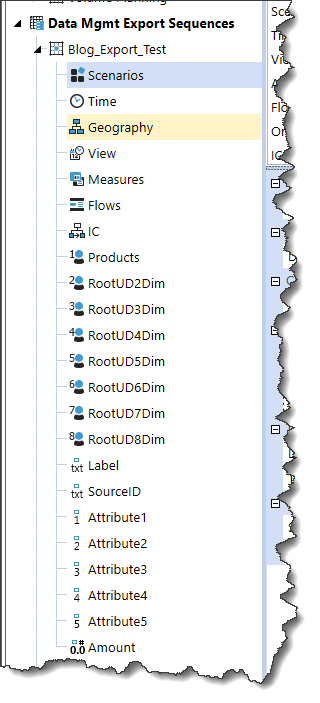

HasData, Annotation, Assumptions, AuditComment, Footnote, and VarianceExplanation

I’m equally not sure what purpose these have in an export – there must be some as there must be with Label, SourceID, etc. – but they are not valued, I am too lazy to figure it out, and I’m going to barge ahead ignoring them. We’ll soon enough find out if this is Incredibly Wise or Stupendously Stupid.

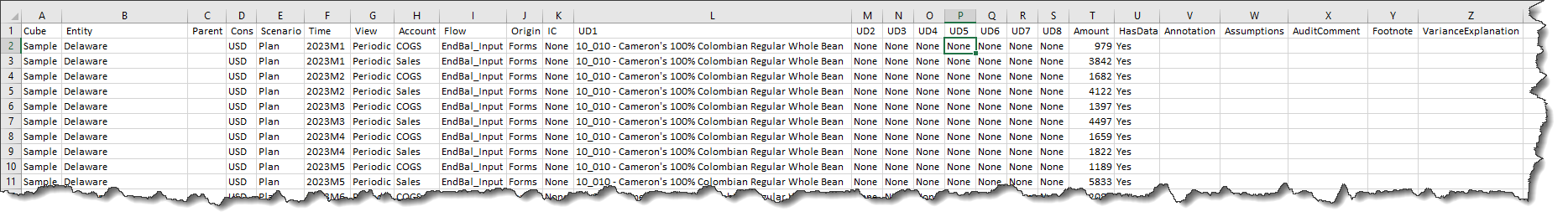

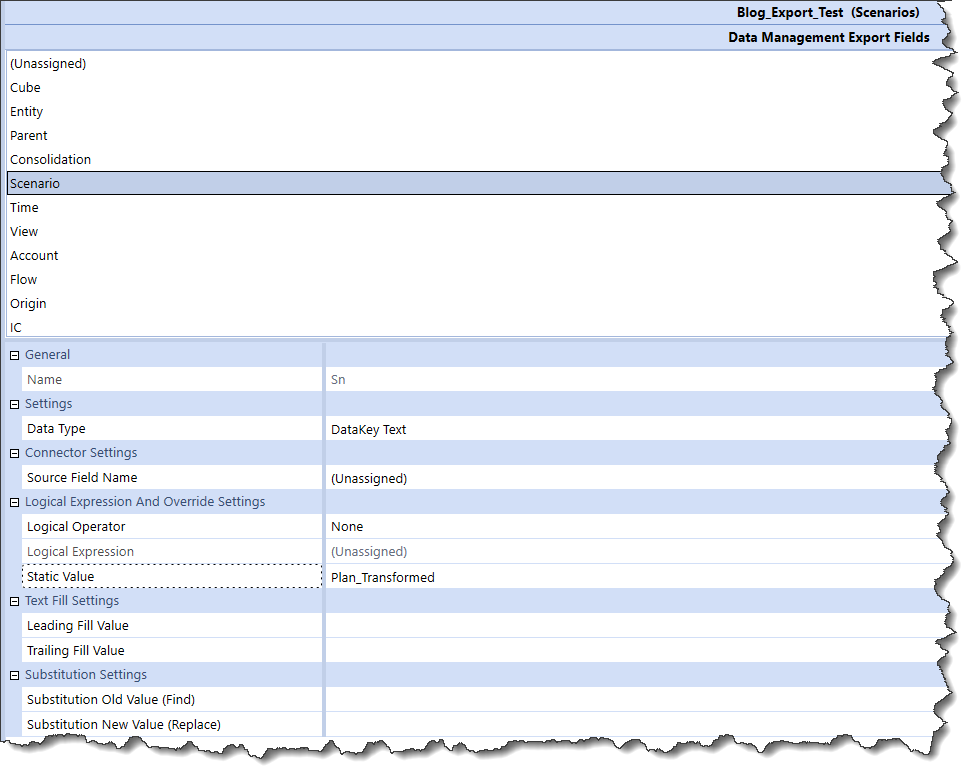

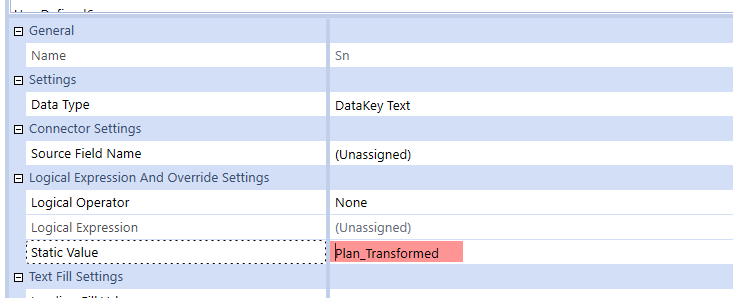

Scenario

My cunning plan for this series is to extract my 2023 Plan data, cleverly transform it (although I have not actually come up yet with what that transformation will be – it will undoubtedly be clever or at least not stupid), and then load it into a Scenario called Plan_Transformed. As that’s the case, I’ll hardcode the Scenario value to Plan_Transformed:

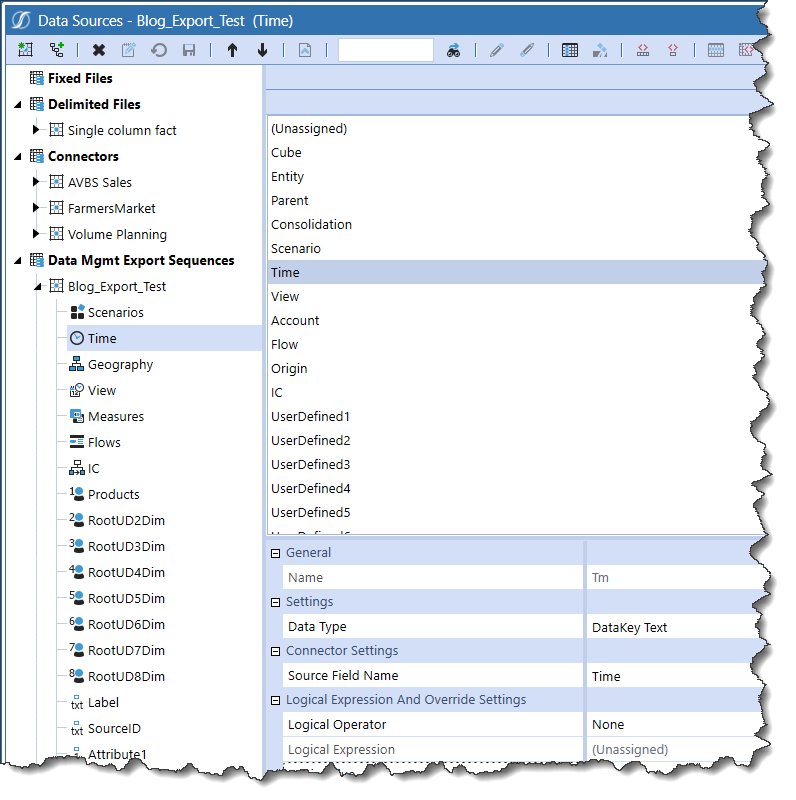

Time and just about everything else

The rest (mostly) of the dimensions are a simple one-to-one assignment. After I get this first go round working, I’ll come back to this and plop in a Business Rule or two into the Logical Operator but for the time being, it goes out as it comes in.

Note that the Source Field Name and the name of the field needs to be selected in the field list to get the assignment to stick. Why it has to be selected twice is best left to the OneStream gods/someone who better understands all of this.

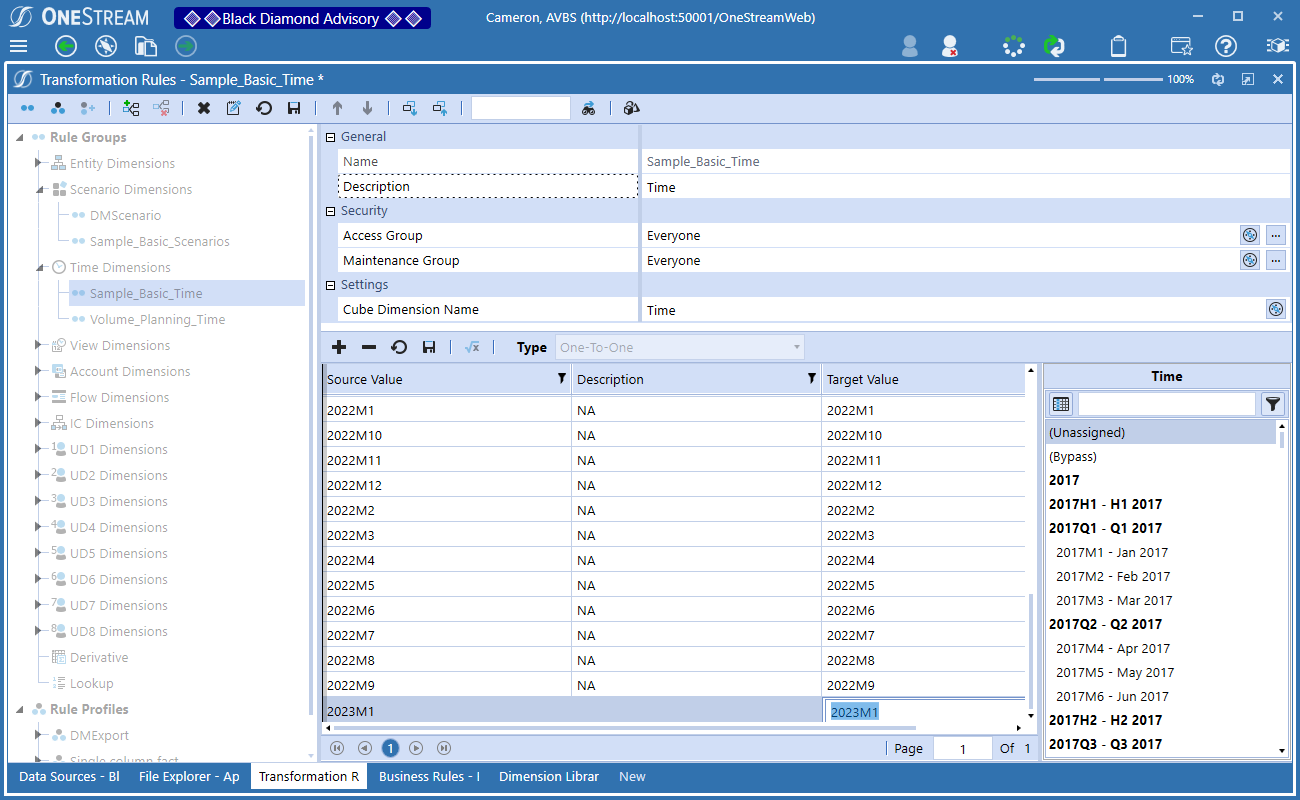

Moving on to Transformation Rules

As I noted, all of the other dimensions, Time through RootUD8Dim and Amount are mapped. However, OneStream requires mapping rules – even if there is zero difference between that data as found in Data Source and the target members/dimensions – in the form of Transformation Rules. Given the simplicity of the use case, these will almost certainly be * to * mappings with the exception of Scenario and Time.

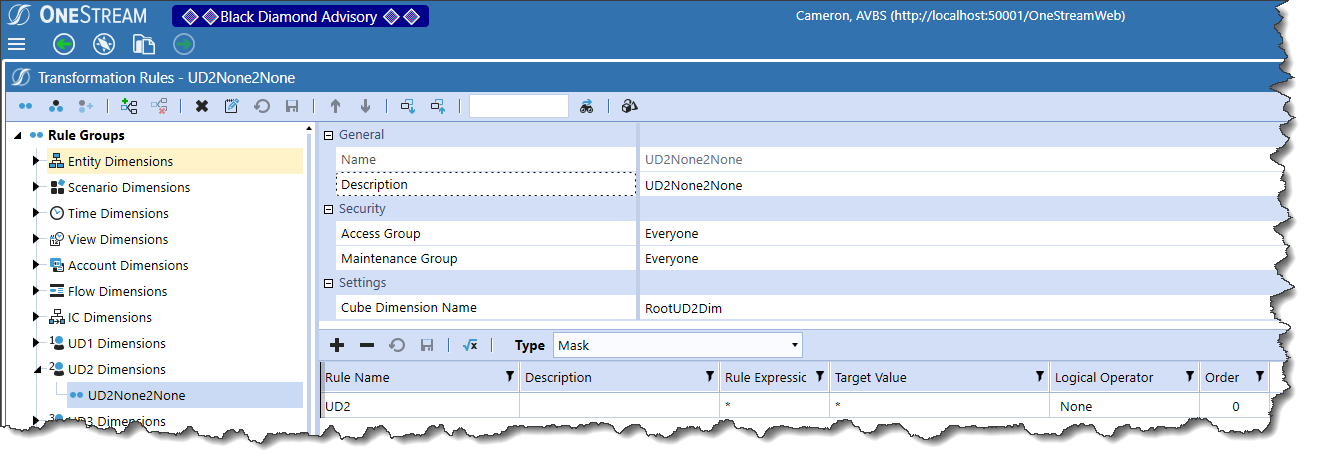

Mapping every dimension

While not strictly required, this example will show the full mapping of all dimensions. Most of them save the last two mentioned above are just like UD2’s:

A mask transformation really is no kind of transformation at all: whatever comes in is what gets loaded to the cube.

Scenario and Time

Neither of these dimensions support Mask mapping nor indeed Composite, Range, or List; only One-to-One can be used.

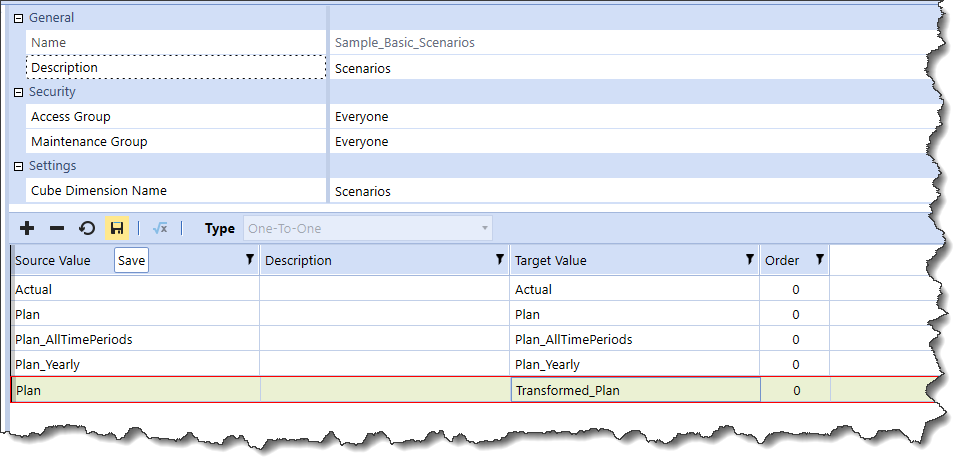

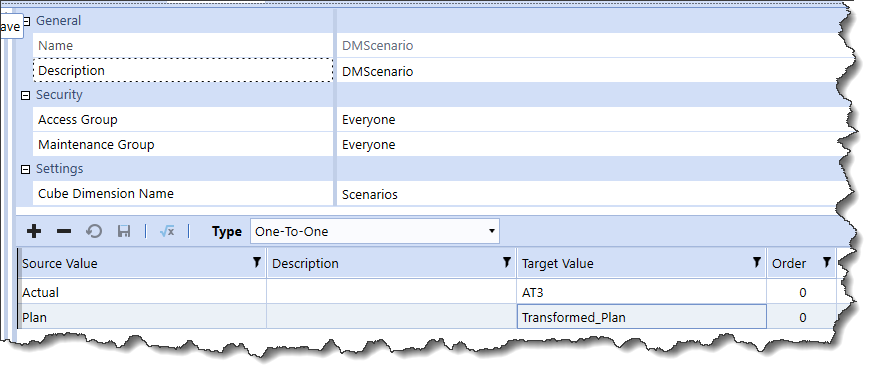

Scenario

In the case of Scenario, this is as one might wish: Plan will be mapped to Transformed_Plan. Note that I cannot use the existing Sample_Plan_Scenarios Transformation Group because Plan is already mapped to Plan (remember what I wrote about the mapping requirement even when the member names are exactly the same).

I can either create a new group or use a different one that doesn’t include a Plan->something and then define that mapping:

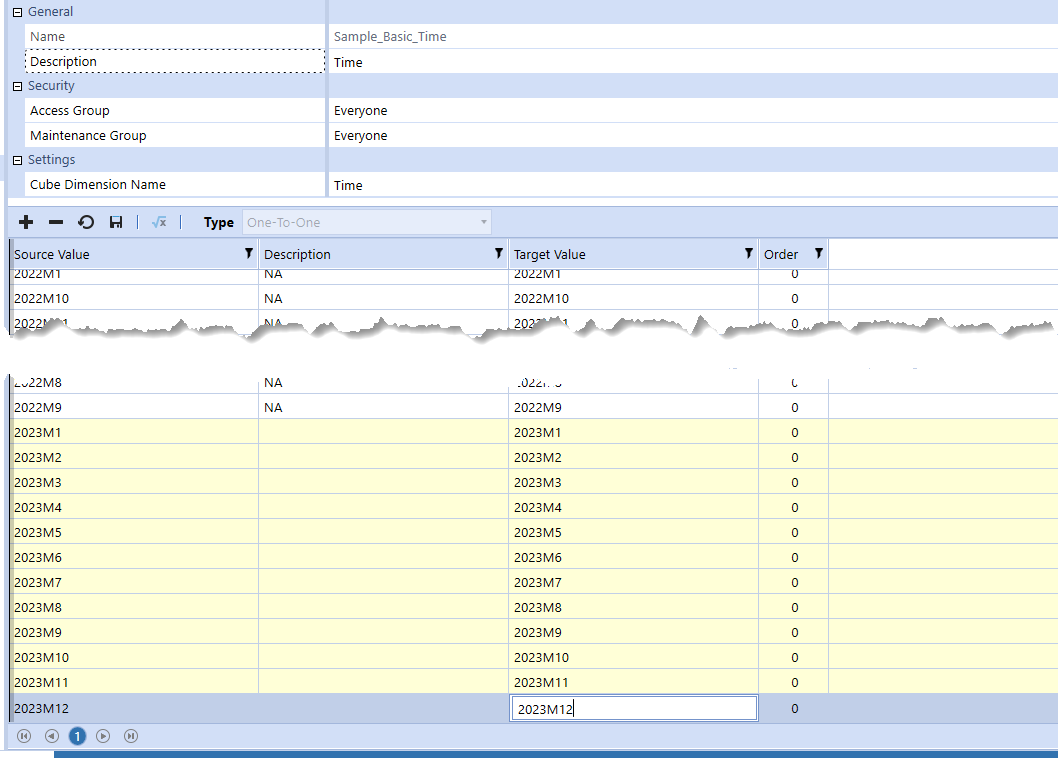

Time

The existing Time mapping must be expanded to support 2023. As with Scenario, only a One-to-One mapping is supported. I’m sure there’s a good (maybe even great) reason, but I have no idea what that might be.

That leaves me with this:

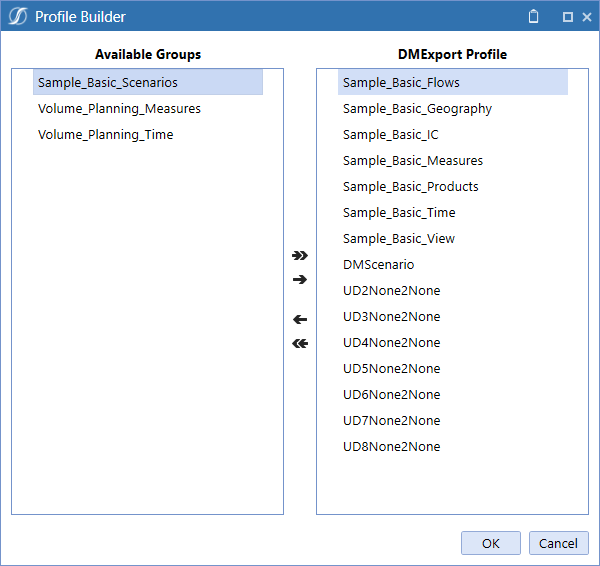

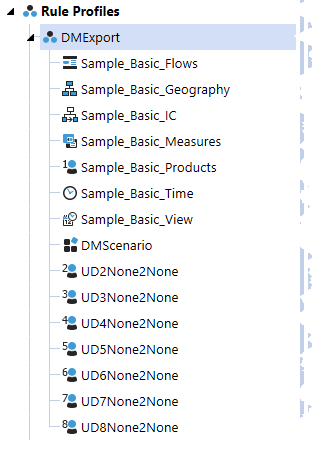

Rule Profiles

Once the Rule Groups are complete, they need to be brought together into a single Rule Profile. There’s an existing one called DMExport that I will expand for this blog post. I’d be awfully careful about doing something like this in a production (or even development) environment unless you are 100% aware of potential impact:

A simple movement across defines the expanded DMExport Rule Profile:

Workflow Profile

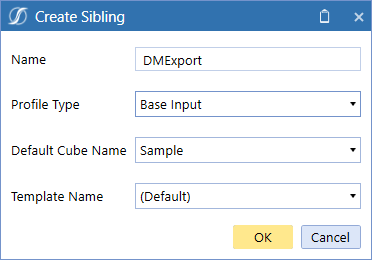

A Workflow Profile Base Input Profile Type will bring the Data Source and Transformation Rules together so that they can be loaded. As I am not particularly creative, I’ve named this Base Input Profile Type as “DMExport”.

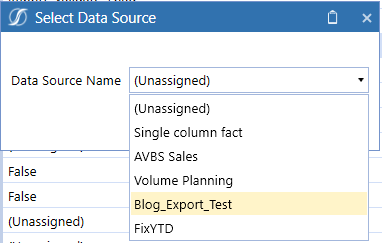

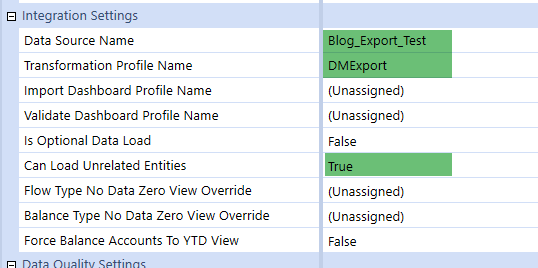

The Import Workflow Profile Child (see this post on Workflow nomenclature) will be used to, unsurprisingly, import to Transformed_Plan. To do that I need to assign the Data Source Name:

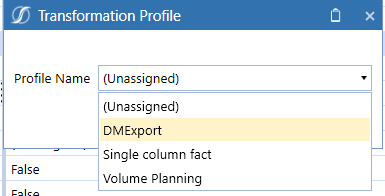

And the Transformation Profile Name:

I am a rebel (and thus care not a whit about Workflow constraints) and also this is all for a blog post, so I’ll set the Can Load Unrelated Entities property to True. If I didn’t do that, I’d have to explicitly assign the Entities that this Workflow Profile Type can load to.

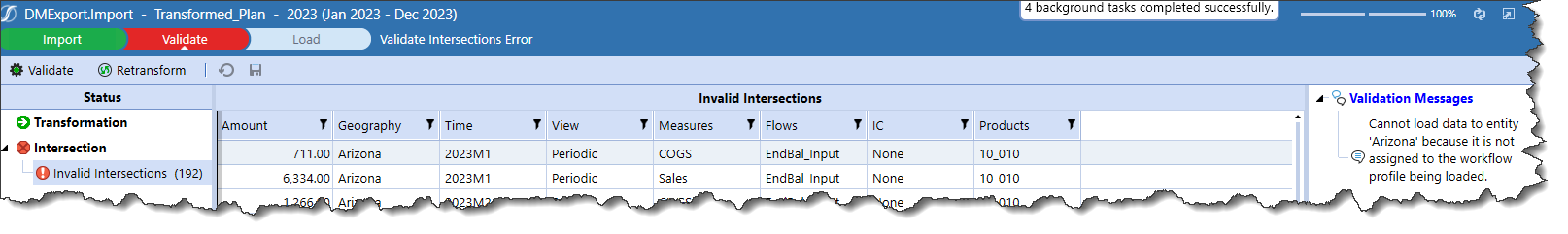

If I don’t use that setting and if I don’t explicitly assign the relevant Entities, disaster strikes:

Let’s avoid disaster. Here’s (hopefully) the required Integration settings:

The proof of the pudding is in the eating or is that the loading?

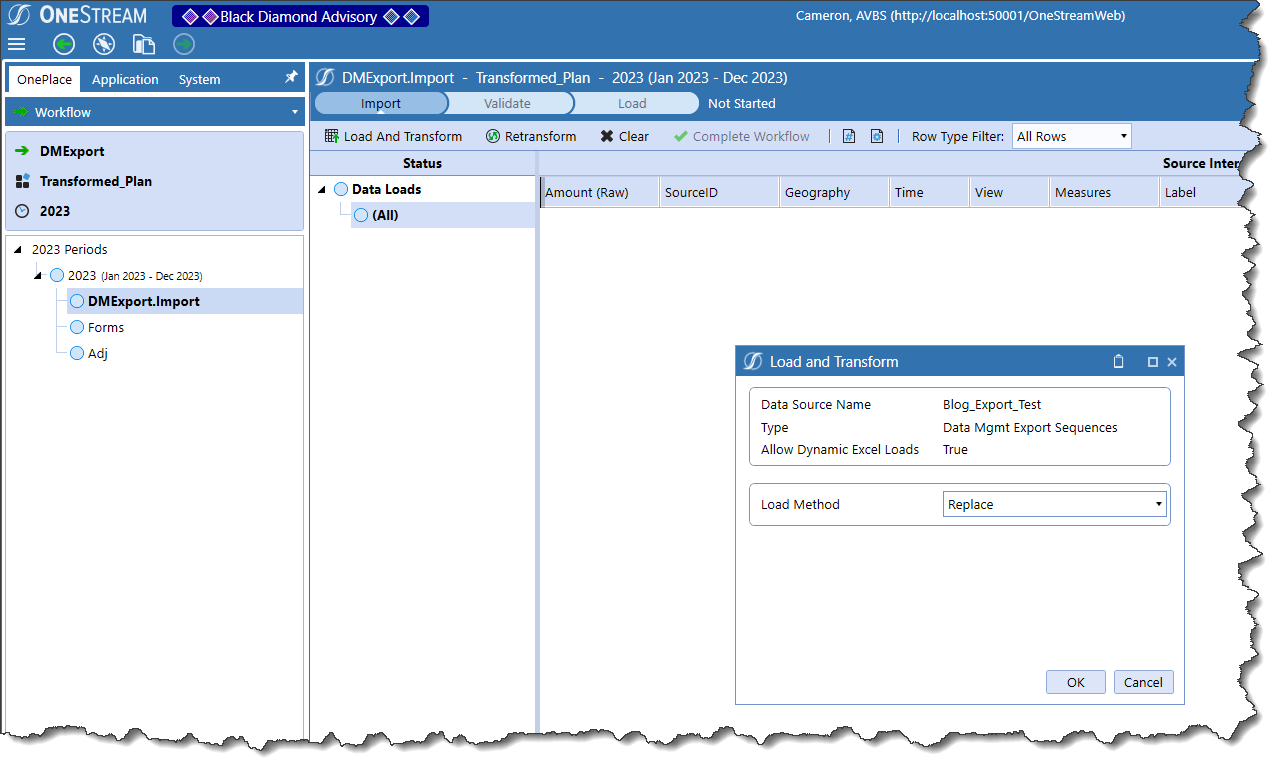

Notice that there is no file to point at – this is all running through that Data Management Step export process.

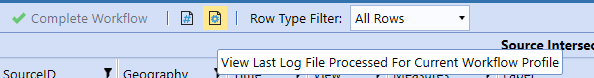

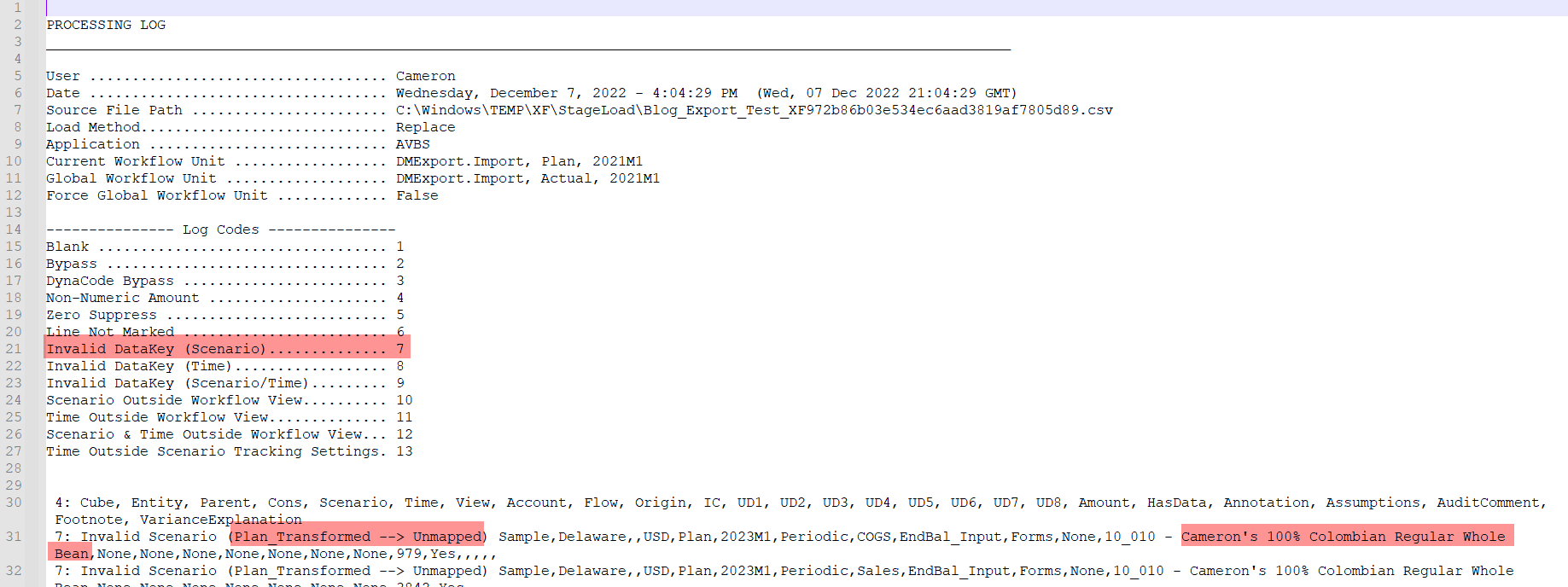

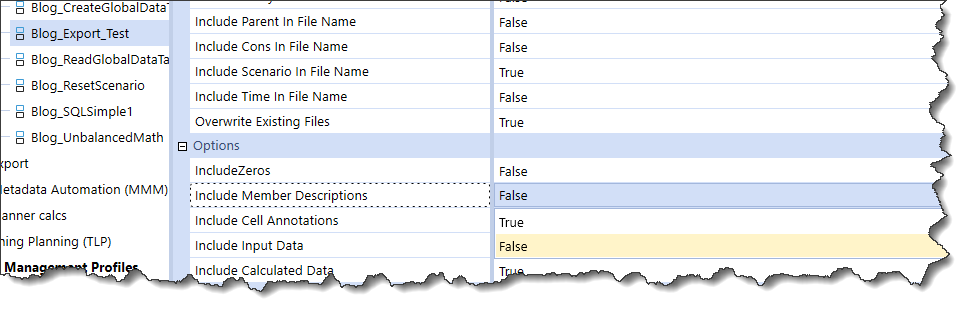

And KABOOM! Failure, but why? OneStream helpfully stores the last log file. Big Whoops, I have a bad Scenario. I also have member descriptions in my export.

The former error can be corrected by getting rid of the Static Value “Plan_Transformed”? Why, when that string matches the Scenario? It’s because I didn’t put it into the Scenario Transformation Rule. Great Googly Moogly, I give up with clever, for now at least, and will simply map it as per the other dimensions.

And let’s go get rid of those member descriptions in the Data Export Data Management Step:

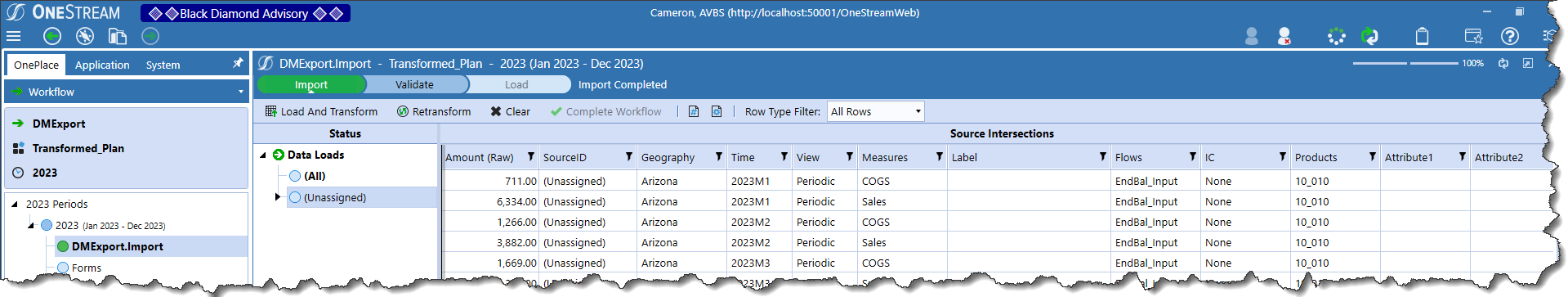

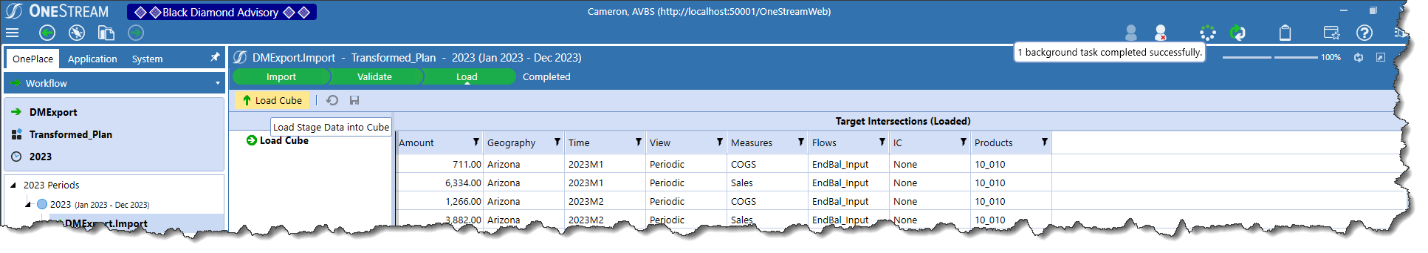

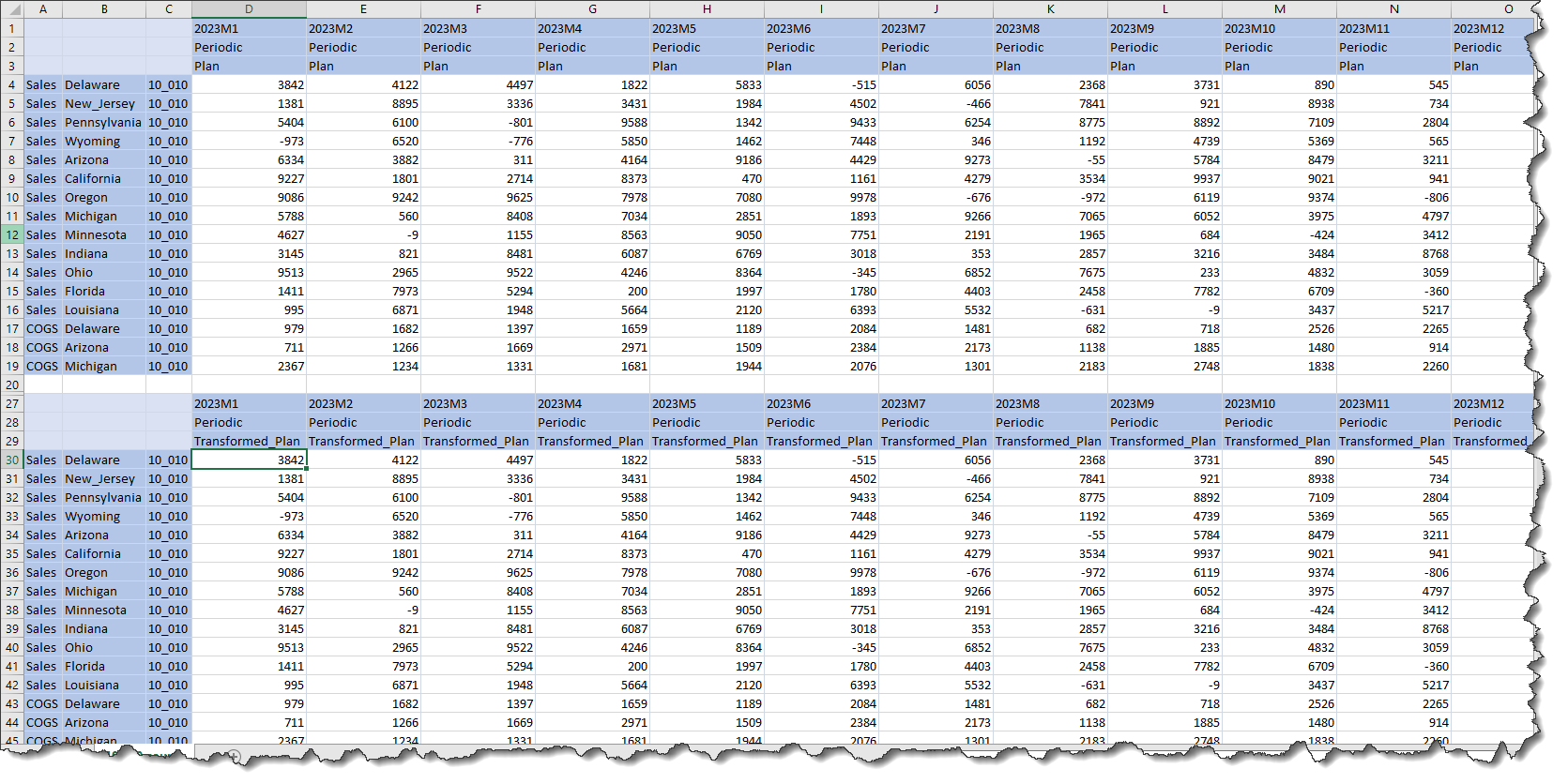

Give it all a whirl again, and What-ho!, it seems to have Imported to Stage:

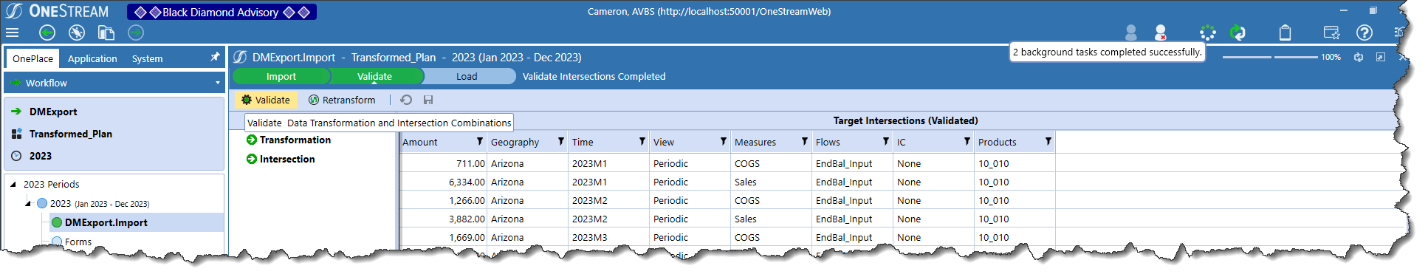

And it validates:

And it loads to the cube:

Does it? Really?

It does.

Wait, there’s more?

Yes, yes, as a matter of fact there will be. Beyond a smattering of transformations, I want to explore the different kinds of data loads, e.g. Replace/Replace (All Time)/Replace Background (All Time, All Source IDs)/Append as well as what it takes to actually clear cube data on load. Exciting (well, I think so) stuff.

Be seeing you.