A Financial Analyst Perspective

Working with OneStream software for the first time, coming from a financial analyst background, I was always a bit confused by how data was stored for the reports I was creating or the hacked-together solutions I was deploying through OneStream reporting objects. I was familiar with data warehousing concepts and had worked almost entirely within a Kimball-designed data warehouse, creating Power BI reports, throwing data into Excel sheets, crunching data in Python, but when I switched over to OneStream’s cube-like data solutions, it didn’t really quite click as to how to manipulate data within OneStream until I saw the SQL tables under the hood.

It’s probably also worth noting that when you start out as a financial analyst, you usually start to understand the OneStream application backwards because usually the entire system is already built by the time they even let you in to mess around with things like Cube Views or Report Books. So hopefully, this perspective is at least somewhat relatable to those in similar roles.

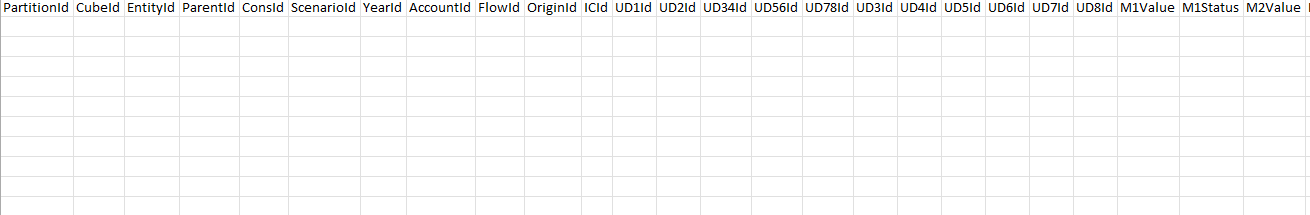

Essentially, data within OneStream – except for dynamically aggregated results – in its purest form is stored in data records in MS SQL Server that use a “DataRecordYYYY” naming convention, where YYYY is the corresponding year that the data is recorded in. Each row in this table corresponds to all 18 dimensions as columns, along with additional columns for M1, M2, … , M12, coupled with values and statuses.

Something like below:

The extra column PartitionId is what gets used by OneStream’s in-memory engines to split up processing by EntityId

Disclaimer: I am assuming you know how api.Data.Calculate() works as well as how to write Member Filters

So with that in mind, let’s look at an example of when you run an api.Data.Calculate() function within a business rule or stored formula:

api.Data.Calculate("A#SomeAccount = A#SomeOtherAccount")

When you run this api.Data.Calculate() function above through a business rule or stored value formula, OneStream does some finaggling in the background (using functions that manipulate the data in-memory) that looks at all existing instances of A#SomeOtherAccount and sets A#SomeAccount to A#SomeOtherAccount’s values for the data unit that you’re running the function for (where a Data Unit is defined as Cube, Entity, Parent, Consolidation, Scenario, Time).

However, a data unit only covers some of the columns in the SQL table – the full listing of dimensions are the following:

- Cube

- Entity

- Parent

- Consolidation

- Scenario

- Time

- View

- Account

- Flow

- Origin

- Intercompany

- UD1 – UD8

For the above api.Data.Calculate() example, were it run through a Custom Calculate Data Management step to run a Finance Business Rule (with only the Data Unit defined and POV left blank), then the function will go out and look for every single row in the appropriate DataRecordYYYY table (again, for the relevant data unit) and do a calculation for A#SomeAccount using A#SomeOtherAccount’s values for all the other dimension combinations where data exists.

The important bit here, is it will only grab the dimension combinations where data exists – so for every other dimension combination that has no data, OneStream’s finance engine will not go through the entire database and copy over zeroes if the data does not exist. To riff on this a bit more – api.Data.Calculate() can only see rows within the relevant “DataRecordYYYY” table when doing comparisons. This limits data size in the tables, improves performance by observing sparsity and results in meaningful results

As a financial analyst, if you were tasked to create some calculation and fill data for some intersection, it is extremely important that you understand what base members you’re doing the calculation for. Because if you left everything wide open like above and you don’t really have a fundamental understanding of how the data is structured, you could end up creating a ton of data accidentally. In the case above, the finance engine will quite literally grab every single data point related to A#SomeOtherAccount for the relevant data unit and create the necessary intersections for A#SomeAccount within the SQL table (which may or may not be what you wanted to happen; but obviously, if it’s something that you’re trying to do intentionally, then go for it).

To harp on about data existing, I mean that the specific dimension combination would need to have at least one value populated for the year.

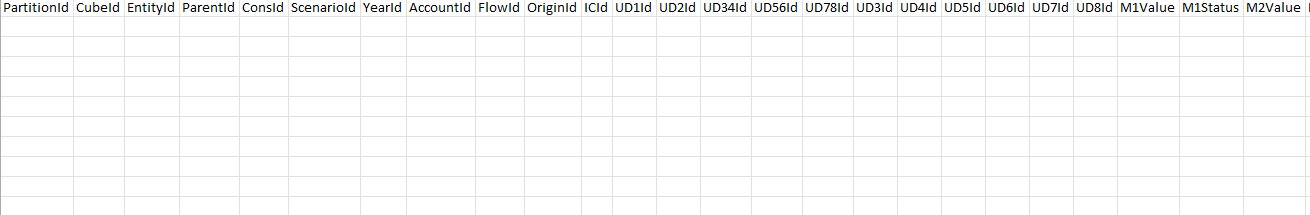

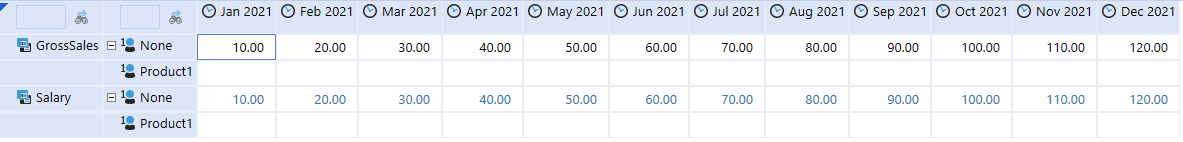

So, to continue with the api.Data.Calculate() example – let’s say I wanted to copy all the values in A#GrossSales to A#Salary shown in this cube view below (for some weird reason):

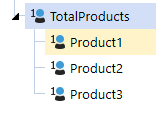

The second row level in this cube view is a UD1 that has several products and only serves a purpose in throwing some data in a different dimension combination specific to this example:

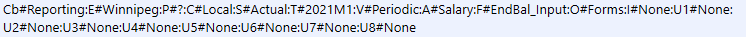

Now, If I ran this code within a finance business rule (for the relevant data unit within this cube view):

api.Data.Calculate("A#Salary = A#GrossSales")

This is what happens:

For those values to copy over to A#Salary:U1#None, data must have existed in A#GrossSales:U1#None and if they didn’t then nothing would get copied over. Immediately upon copying, a corresponding row in the relevant SQL table shows up with all dimensions specified as well as values populated.

If I look at the cell POV for the T#2021M1 entry for A#Salary:U1#None in this cube view:

These exact dimensions would be (must be) populated in the SQL table (as MemberIds, not names) along with every M1-M12 value with a corresponding status. You can check yourself in System -> Database if you tried this on your own instance.

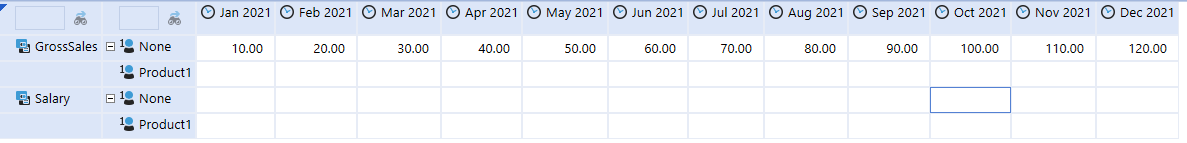

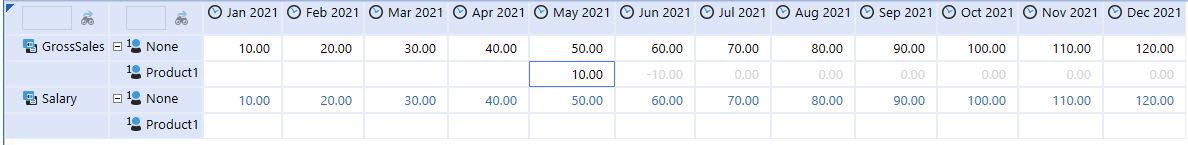

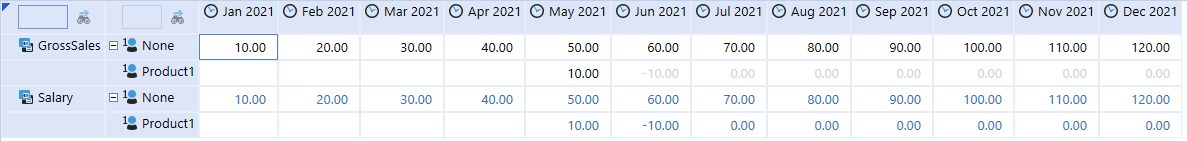

Moreover, suppose I wanted to copy some data from A#GrossSales:U1#Product1 to A#Salary:U1#Product1 where data was sparsely populated like below:

In this case, the time periods that show no data will be thrown into the database as zeroes with a cell status of ‘No Data’ for January to April. From June to December, zeroes will also be put in its place, but instead with a status of ‘Calculated/Derived’.

Since those values are tagged with a ‘No Data’ status, they will show up as blanks in the cube view, for the other months that are in the database as zeroes, but with a status of ‘Calculated/Derived’, they show up as grey:

This is a fairly long example using api.Data.Calculate(), but this entire process of OneStream throwing data into respective DataRecordYYYY tables isn’t exclusive to just this function – it’s what happens for everything and anything that is involved with storing cube data in OneStream (not to be confused with stage data, those have their own tables). Another example would be through the input of data using the Forms Origin from a Cube View specifically setup for data entry – the same kind of thing happens.

Moreover, just because this data record table exists as a SQL object doesn’t mean you can just update M1-M12 values and statuses with an update SQL statement. OneStream deals with consolidations (and really any calculation in the application) using its own calculation engine by referencing the data record tables and there’s more to it than just updating the data record table. It’s also advisable to never do this to any of the formally created OneStream SQL tables unless you absolutely know what it will do within the system, just experimenting on data like this will most likely corrupt your application. Feel free to try and break things on your own experimental application though; in fact, I actively encourage you to do so because some of this stuff just isn’t documented as well as it should be.

Warnings aside, understanding how OneStream stores its data (even on the surface like this) definitely gave me a better mental model of what was going on in the background whenever I imported data, saved it through a form, or calculated it through a business rule. I’m the type of person that needs to understand structures at their most granular level when designing solutions, so visually seeing how the data sat in the SQL database not only made it easier to design processes through business rules, but it also gave me a grounding of how I should be thinking about OneStream’s data in general (so as to combat situations where I have no idea why data isn’t showing up when I plop Member Filters into Cube Views or why data that I calculated isn’t as I expect).

There’s way more to talk about when it comes to OneStream’s data, but this should be a good starting point for those just getting into it.